Claude Code Leaked. I Looked Under the Hood.

Claude Code CLI accidentally exposed part of its codebase. I pulled the package and looked under the hood. The direction is clear: AI agents are becoming systems.

Posts are circulating that claim Claude Code accidentally shipped a source map file that exposed a large portion of its codebase. I missed the leak, and it has already been patched. I'm not digging through mirrored zip files from anonymous sources to search for secrets.

But it got me thinking. What can we actually find just by pulling the live npm package? So I pulled it and looked under the hood.

The minified CLI bundle for @anthropic-ai/claude-code@2.1.87 is 13 MB. The SDK type definitions alone are 117 KB. I extracted string literals, telemetry event names, CLI flags, and API endpoints to map out what's actually implemented versus what's been announced.
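For readers who want to try the same kind of extraction, here is a minimal sketch of the idea. It is not the exact script used for this post. The two regular expressions are assumptions about how names show up inside quoted strings in minified JavaScript, and the sample string is a made-up stand-in for the real bundle.

```python
import re

# Assumption: telemetry names and flags appear as quoted string literals.
EVENT_RE = re.compile(r"""["'`](tengu_[a-z0-9_]+)["'`]""")
FLAG_RE = re.compile(r"""["'`](--[a-z][a-z0-9-]*)""")


def extract_names(bundle_text: str) -> dict[str, list[str]]:
    """Return sorted, de-duplicated telemetry event names and CLI flags."""
    events = sorted(set(EVENT_RE.findall(bundle_text)))
    flags = sorted(set(FLAG_RE.findall(bundle_text)))
    return {"events": events, "flags": flags}


if __name__ == "__main__":
    # Hypothetical snippet shaped like minified code; a real run would
    # read the bundle file from the unpacked npm package instead.
    sample = (
        'a("tengu_model_fallback_triggered");'
        'b("--advisor").hideHelp();'
        'c("tengu_model_fallback_triggered")'
    )
    print(extract_names(sample))
```

A pass like this only tells you that a string exists in the bundle. It says nothing about whether the code path behind it is enabled, so every hit still needs to be read in context.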

What This Shows

The direction is very clear. AI agents are becoming systems. This is a move from chat and copilots to systems that run on their own, respond to events, and coordinate work across multiple agents without anyone sitting at the keyboard.

Most people still think of AI as a conversation. You ask something, it responds, you close the tab. That model is already outdated.

What's actually being built are systems that run on schedules, respond to events like deployments failing or files arriving, and coordinate multiple agents working on different parts of a task at the same time. The interaction model is shifting from pull to push. Instead of you going to AI when you need it, AI runs continuously and acts when something happens.

What That Looks Like Inside a Company

A finance system that builds reports every morning before anyone opens their laptop. A sales system that generates a working prototype environment before every prospect call. An ops system that watches incoming files and structures the data automatically without anyone touching a spreadsheet.

Instead of one person prompting one chat thread, a team of agents coordinates on a codebase. One plans the approach, one writes the code, one reviews it. They share memory. They hand off tasks. This is not hypothetical. This is what the tooling is moving toward.

What I Found in the Code

The CLI already has multi-agent team coordination, cron-style scheduled execution, remote triggers that run agents in the cloud via API, push-based workflows where external systems send events to agents in real time, and a dual-model architecture where one model guides or reviews another. These aren't concepts on a roadmap. They're implemented features with flags, telemetry, and endpoints.

The multi-agent feature is a complete team orchestration framework. A lead agent creates a team, spawns teammates, assigns tasks, and coordinates work. Each agent gets an ID, a name, a color, and a role. They communicate through direct messages and share memory with push/pull sync.

Three execution modes exist: each agent in its own terminal pane, lightweight in-process, or auto where the system decides. The task system supports ownership, blocking, reassignment, and priority ordering. When a teammate finishes a task, it checks the list for the next one. When everything is blocked, the lead gets notified.
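To make the task flow concrete, here is a small sketch of a task board with ownership, blocking, reassignment, and priority ordering. Everything in it is hypothetical: the class names, the "lower number wins" priority rule, and the notification text are my own illustration of the behavior described above, not code from the bundle.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Task:
    task_id: str
    priority: int = 0  # assumption: lower number is picked first
    owner: Optional[str] = None
    blocked_by: set[str] = field(default_factory=set)
    done: bool = False


class TaskBoard:
    """Hypothetical shared task list for a lead agent and its teammates."""

    def __init__(self) -> None:
        self.tasks: dict[str, Task] = {}
        self.lead_notifications: list[str] = []

    def add(self, task: Task) -> None:
        self.tasks[task.task_id] = task

    def _is_blocked(self, task: Task) -> bool:
        # A task is blocked while any task it depends on is unfinished.
        return any(not self.tasks[dep].done for dep in task.blocked_by)

    def claim_next(self, agent: str) -> Optional[Task]:
        """Give the agent the best unowned, unblocked task, if one exists."""
        open_tasks = [
            t for t in self.tasks.values() if not t.done and t.owner is None
        ]
        ready = [t for t in open_tasks if not self._is_blocked(t)]
        if ready:
            task = min(ready, key=lambda t: t.priority)
            task.owner = agent
            return task
        if open_tasks:
            # Work remains but none of it can start: tell the lead.
            self.lead_notifications.append("all remaining tasks are blocked")
        return None

    def complete(self, task_id: str) -> None:
        self.tasks[task_id].done = True

    def reassign(self, task_id: str, new_owner: str) -> None:
        self.tasks[task_id].owner = new_owner
```

In this sketch a teammate calls claim_next each time it finishes something, which mirrors the "check the list for the next one" behavior. The lead only hears about it when unowned work exists and none of it is ready.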

Scheduling is fully cron-based, using standard five-field cron expressions. There are two persistence modes: durable jobs that survive restarts and session-only jobs that auto-expire after seven days. Jitter is built in to prevent thundering herd problems. There is also local timezone support and missed execution detection with telemetry tracking.
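If five-field cron expressions and jitter are unfamiliar, this sketch shows both ideas in a few lines. It is a generic illustration, not the scheduler from the bundle. It supports only numeric fields (no names like MON), the function names are my own, and the jitter window is an assumed parameter.

```python
import random
from datetime import datetime, timedelta


def _field_matches(expr: str, value: int, lo: int, hi: int) -> bool:
    """Match one cron field: '*', 'a', 'a-b', 'a,b', with optional '/step'."""
    for part in expr.split(","):
        rng, _, step_s = part.partition("/")
        step = int(step_s) if step_s else 1
        if rng == "*":
            start, end = lo, hi
        elif "-" in rng:
            a, b = rng.split("-")
            start, end = int(a), int(b)
        else:
            start = int(rng)
            end = hi if step_s else start
        if start <= value <= end and (value - start) % step == 0:
            return True
    return False


def cron_matches(expr: str, when: datetime) -> bool:
    """True if 'minute hour day-of-month month day-of-week' matches 'when'."""
    minute, hour, dom, month, dow = expr.split()
    cron_dow = (when.weekday() + 1) % 7  # Python: Monday=0, cron: Sunday=0
    dom_ok = _field_matches(dom, when.day, 1, 31)
    dow_ok = _field_matches(dow, cron_dow, 0, 6)
    # Classic cron rule: if both day fields are restricted, either may match.
    if dom != "*" and dow != "*":
        day_ok = dom_ok or dow_ok
    else:
        day_ok = dom_ok and dow_ok
    return (
        _field_matches(minute, when.minute, 0, 59)
        and _field_matches(hour, when.hour, 0, 23)
        and _field_matches(month, when.month, 1, 12)
        and day_ok
    )


def with_jitter(fire_at: datetime, max_jitter_s: float,
                rng: random.Random) -> datetime:
    """Delay a fire time by a random amount so jobs do not all start at once."""
    return fire_at + timedelta(seconds=rng.uniform(0, max_jitter_s))
```

For example, "0 7 * * 1-5" matches 07:00 on weekdays. Without jitter, every client with that schedule would fire at the same second, which is the thundering herd problem the built-in jitter is meant to avoid.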

Remote triggers are cloud-hosted agents that execute on demand via a REST API. There are full CRUD endpoints for triggers: create, read, update, delete, and run. Combined with local cron, this creates a full autonomous execution platform where tasks fire locally on schedules or remotely via API, without anyone at a terminal.

MCP servers can now act as channels that push events into active sessions. This flips the interaction model entirely. Instead of the agent pulling from tools when asked, external systems push to the agent: a GitHub webhook fires, a CI build fails, a deployment completes. The agent reacts. There's an allowlist system for approved channel servers, and development channels require explicit opt-in with a confirmation dialog.
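Here is a rough sketch of what push with an allowlist looks like in principle. It does not use the MCP SDK or any real Claude Code API. The Session and ChannelEvent classes, the server names, and the event kinds are all hypothetical, and the dev opt-in set stands in for the confirmation dialog.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ChannelEvent:
    server: str   # which channel server sent the event
    kind: str     # e.g. "ci_failed" (made-up event kind)
    payload: dict


@dataclass
class Session:
    """Hypothetical agent session that accepts pushed events."""
    allowlist: set[str]
    dev_opt_in: set[str] = field(default_factory=set)
    handlers: dict[str, Callable[[ChannelEvent], str]] = field(
        default_factory=dict
    )
    log: list[str] = field(default_factory=list)

    def on(self, kind: str, handler: Callable[[ChannelEvent], str]) -> None:
        self.handlers[kind] = handler

    def push(self, event: ChannelEvent) -> bool:
        """Called by the outside world; returns True if the agent reacted."""
        approved = (
            event.server in self.allowlist or event.server in self.dev_opt_in
        )
        if not approved:
            self.log.append(f"rejected event from {event.server}")
            return False
        handler = self.handlers.get(event.kind)
        if handler is None:
            return False
        self.log.append(handler(event))
        return True


if __name__ == "__main__":
    session = Session(allowlist={"github-channel"})
    session.on("ci_failed", lambda e: f"investigating {e.payload['job']}")
    session.push(ChannelEvent("github-channel", "ci_failed", {"job": "build"}))
    session.push(ChannelEvent("unknown-server", "ci_failed", {"job": "build"}))
    print(session.log)
```

The important part is the direction of the call. The session never polls anything. The external system calls push, and the allowlist check decides whether the agent is even allowed to see the event.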

The advisor is a secondary model that runs alongside the primary one during a session. One model writes code while another reviews it. Or a specialized model provides domain expertise while a general model handles execution. Token usage is tracked separately and the advisor can be interrupted mid-response. The tool definition is dated March 2026, making it very recent.

Ultraplan is a cloud-based planning system that runs server-side with its own model selection and approval workflow. It has eligibility checks, suggesting a premium or enterprise tier. Voice mode adds WebSocket-based speech-to-text with native audio capture binaries for macOS, Linux, and Windows.

File history and rewind provides session-level snapshots independent of git, so you can undo individual operations without touching your working tree. There's a full plugin marketplace with lifecycle management. Auto mode uses an AI classifier to automatically approve or deny tool use, with configurable rules and a built-in critique command. Grove is an enterprise privacy and policy system with organization-level compliance controls.

Other Fun Finds

Beyond the major features, the bundle is full of interesting details that tell you a lot about where this project is headed and how it's being built internally.

There's a whole set of CLI flags hidden from claude --help. These are experimental or internal features that exist in the codebase but aren't documented. The multi-agent flags alone include team names, agent colors, teammate execution modes, and a flag that forces agents to plan before they implement anything.

The CLI has flags marked with .hideHelp() that don't show up in help output. These include --advisor for enabling a secondary model, --enable-auto-mode for automatic tool approval, --brief which hides all output except explicit SendUserMessage calls, --channels for registering MCP push notification servers, and --teleport for resuming cloud sessions locally.

The multi-agent flags are the most extensive: --agent-id, --agent-name, --team-name, --agent-color, --agent-type, --teammate-mode (tmux, in-process, or auto), --parent-session-id, and --plan-mode-required. There's also --sdk-url for WebSocket-based remote streaming in headless mode, and --tmux which uses iTerm2 native panes on macOS when available.

The bundle has hardcoded references to specific model versions including Opus 4.6, Sonnet 4.5, Sonnet 4.0, Sonnet 3.7, and Haiku 4.5. More interesting is the fallback system built around them. If Opus is unavailable, the CLI automatically falls back to an alternative and tracks the event through telemetry.

Specific model IDs include claude-opus-4-6-v1, claude-sonnet-4-5-20250929-v1, claude-sonnet-4-0, claude-3-7-sonnet-20250219-v1, claude-haiku-4-5-20251001, and several older variants. The fallback system is tracked by tengu_api_opus_fallback_triggered for Opus-specific fallbacks and tengu_model_fallback_triggered for general fallbacks. There's also a legacy migration path from older Opus versions and a mechanism to reset Pro tier defaults back to Opus.
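The fallback pattern itself is simple, and a sketch helps show where the telemetry fits. The two event names and the model IDs below come from the bundle. Everything else is assumed: the order of the chain, the function names, the payload shape, and the rule for choosing which event to emit.

```python
from typing import Callable


class ModelUnavailable(Exception):
    """Raised by the (hypothetical) transport when a model cannot serve."""


def call_with_fallback(
    chain: list[str],
    send: Callable[[str], str],
    emit: Callable[[str, dict], None],
) -> str:
    """Try each model in order, emitting a telemetry event on each fallback."""
    last_error = None
    for index, model in enumerate(chain):
        try:
            return send(model)
        except ModelUnavailable as err:
            last_error = err
            if index + 1 < len(chain):
                # Assumption: Opus failures get the Opus-specific event.
                event = (
                    "tengu_api_opus_fallback_triggered"
                    if "opus" in model
                    else "tengu_model_fallback_triggered"
                )
                emit(event, {"from": model, "to": chain[index + 1]})
    raise RuntimeError("no model in the chain was available") from last_error


if __name__ == "__main__":
    def fake_send(model: str) -> str:
        # Stand-in for a real API call: pretend Opus is down.
        if "opus" in model:
            raise ModelUnavailable(model)
        return f"response from {model}"

    events: list[tuple[str, dict]] = []
    reply = call_with_fallback(
        ["claude-opus-4-6-v1", "claude-sonnet-4-5-20250929-v1"],
        fake_send,
        lambda name, props: events.append((name, props)),
    )
    print(reply)
    print(events)
```

Having a dedicated Opus event next to the general one suggests that Opus availability is something the team wants to watch on its own, separate from overall fallback volume.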

Every telemetry event in the bundle is prefixed with tengu_, which appears to be the internal project codename for Claude Code. The scheduling system is codenamed KAIROS. The enterprise privacy system is called Grove. There's even a "Penguin Mode" with org-level controls that looks like a compliance or restricted operation mode. The sheer scope of instrumentation across agents, teams, memory, billing, and performance tells you this is being treated as production infrastructure.

The telemetry has dedicated event categories for session lifecycle, agent coordination, team memory sync, planning phases, scheduling, tool permissions, auto mode decisions, MCP connections, memory access, streaming performance, context compaction, file history, worktree management, remote sessions, plugins, billing thresholds, and more. Each category has multiple granular events tracking success, failure, and configuration changes.

Context management alone has multiple compaction strategies: auto, partial, smart, and time-based micro-compaction. Cache sharing has its own fallback chain. There are dedicated content block types for compaction and container uploads. This level of optimization around context windows and caching tells you they're operating at a scale where these savings matter.

The Shift

This is a shift from using AI when you need it to AI systems running continuously inside the business. The current model is reactive. You open a chat, ask a question, get an answer. What's being built is persistent. Agents that run on schedules, respond to events, coordinate with other agents, and carry memory across sessions.

We've moved from better chatbots to real infrastructure.

The Gap

Most companies aren't set up for this. Not because of the tools. The tools are already here, implemented in the CLI right now. The gap is that no one owns building these systems. No one is looking at the daily workflows and asking which of these should be running automatically, or which should be reacting to events instead of waiting for someone to remember to check.

Moving fast with AI isn't about better prompts. It's about building systems. Systems that run, react, and improve over time. That requires someone who owns it.

David Johnsen

Founder, CloudBuddy Solutions

Want to automate a workflow in your business?

Start with a free audit to find your highest-value opportunity.

Request workflow mappingMore posts

A Repeatable System Audit Framework for Production Software

A repeatable framework for auditing a SaaS codebase at scale. A set of audit tracks you select and adapt to your system, an invariants loop that stops regressions, and a verification cycle that makes each audit cheaper than the last. One recent application surfaced hundreds of findings and promoted 36 invariants to code-level guardrails.

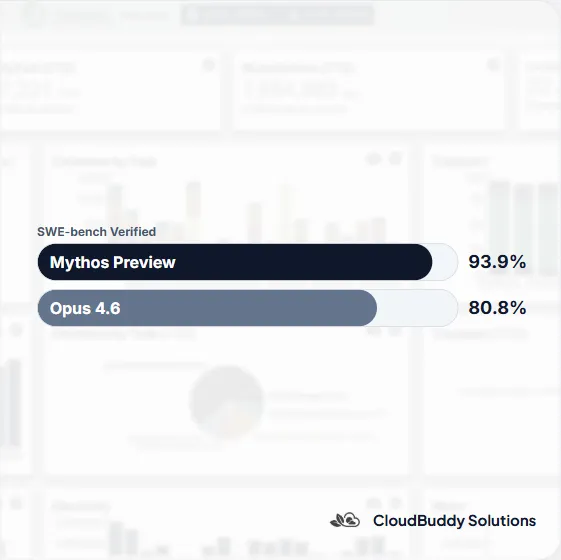

AI Just Took a Leap. Access Is Becoming a New Advantage.

Anthropic released Mythos Preview to a closed group of organizations. The capability leap is real, but the access model may matter just as much. As AI shifts from an equalizer to a gated advantage, the teams that win will be the ones that can turn that capability into working systems.

I Rebuilt My Company Site From My Phone (At the Gym)

What it actually looks like to work with remote AI agents. What worked, what didn't, and how Claude Dispatch compares to our custom AI tools.